Most world languages don’t have the abundant resources of texts in electronic form that computer scientists have used to create the now-widely used programs that turn (for example) English into Chinese.

Now, Kevin Knight of ISI, who helped pioneer these earlier systems, is working as part of a multi-university team in a five-year effort to find less statistical, more semantic points of attack on ‘low-density’ languages, starting with some spoken in Africa.

Now, Kevin Knight of ISI, who helped pioneer these earlier systems, is working as part of a multi-university team in a five-year effort to find less statistical, more semantic points of attack on ‘low-density’ languages, starting with some spoken in Africa.

The strategy aims not only at developing a paradigm for creating new translation systems for such languages, but also at improving the state of the art of machine translation for the traditional high-density targets.

It may even lead toward partial realization of a long held dream: finding a consistent path through the wild variations in natural human languages to a common core of meaning – a vision referred to in the project’s description, “The Linguistic-Core Approach to Structured Translation and Analysis of Low-Resource Languages.”

Statistical translation, according to the research summary for the MURI (Multidisciplinary University Research Initiative) project recently funded by the U.S. Army Research Office, is based on glueing together phrases, found in computer searches of huge volumes of parallel texts, into sentences.

But “even systems trained on large parallel document collections mistranslate simple sentences. This is not surprising: current machine translation systems have limited knowledge of linguistic structure and thus cannot effectively capture translation patterns.

“New advances will require deeper, more linguistically-realistic models of translation, integrating what we know about how syntax, word formation, and semantics operate across a wide range of natural language,” the research summary notes.

As stated in the proposal, the goal is to dramatically expand existing capabilities to process low-resource and typologically diverse languages by attempting to:

Kevin Knight

These new method grows out of beyond-statistical, syntax-based translation refinements that Knight, an ISI fellow and project leader in ISI's Natural Language Group who is also a research associate professor in the Viterbi School Department of Computer Science, have developed in the past 6 years. Working mostly on Chinese-English texts, Knight and his colleagues have developed methods that now outperform the simpler statistical versions.

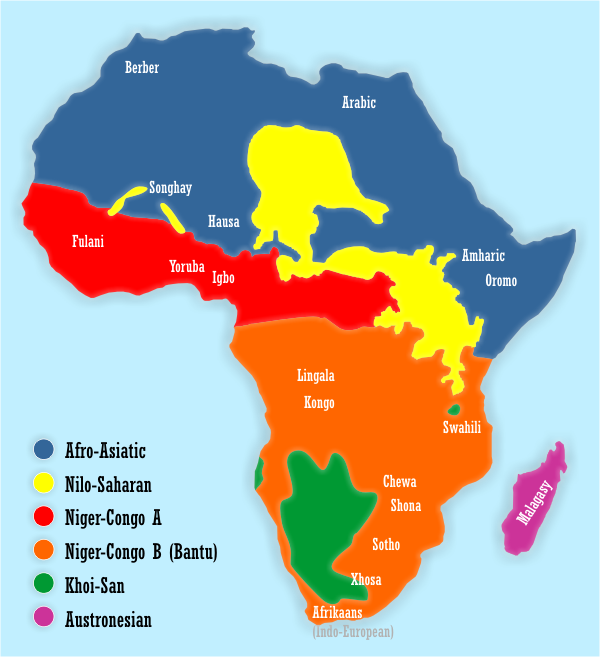

The effort will attempt to incorporate into the systems the kind of information that human learners of a new language must learn – the wild variations in grammatical structure that characterize symbolic speech. “Bantu languages have around 18 noun classes,” the introduction notes. “This is akin to having 18 genders, but instead distinguishing animals, man-made artifacts, etc.”

Enormous as the diversity is, comparative linguistics aided by computer analysis tools has made progress finding common threads and parallelism in the profusion of grammars. The new project will attempt to try to use this progress to help improve machine translation. For example: languages come in families, which display obvious similarities in vocabulary (English ‘home’ Russian ‘dom’ Latin ‘domus’ Sanskrit ‘dama’) and other features. The project will try to build information from these relationships into its modeling.

In addition to advancing machine translation technology, a major aim is creation of machine translation prototypes for African languages like Kinyarwanda and Malagasy, which have not been researched as much as many other languages. The project will continue for five years.

In addition to the USC Viterbi School’s ISI, the other participanting institutions include Carnegie Mellon University's Language Technologies Institute, whose director, Jaime Carbonell is the lead researcher on the project; the University of Texas at Austin's Linguistics Department, and the Massachusetts Institute of Technology EECS Department. David Chaing of ISI will also be working with Knight.